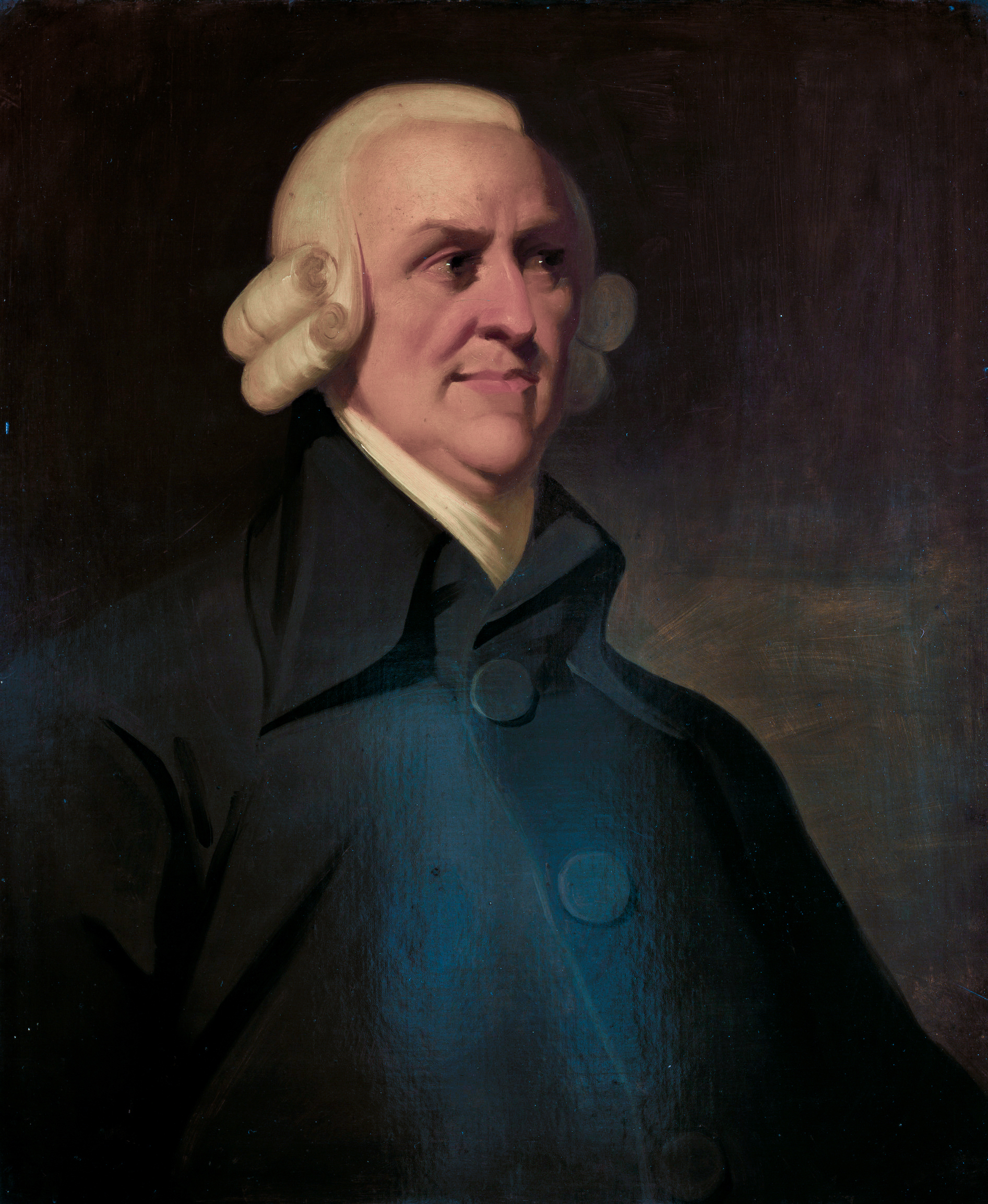

Euclid Of Alexandria

The best known discussion of being is in Euclid - that is to say, from the schools of Eudoxus and Plato.

There exist infinitely many prime numbers! Is there a more beautiful list of concepts to a Platonist? The proof is simple: Take the least common multiple of a finite set of primes and add one. This new number can only have prime factors that are not on the list, because dividing it by anything on the list leaves a remainder of one. Therefore, your old list was too small. By induction on list sizes, one sees the set of primes is infinite.
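The construction can be tried on small lists. What follows is a minimal sketch, not part of the original argument: the helper names `prime_factors` and `euclid_witness` are invented for illustration, and trial division is used only because the numbers involved are tiny.

```python
from math import lcm  # lcm with several arguments needs Python 3.9+


def prime_factors(n):
    """Return the prime factors of n (with multiplicity) by trial division."""
    factors = []
    d = 2
    while d * d <= n:
        while n % d == 0:
            factors.append(d)
            n //= d
        d += 1
    if n > 1:
        factors.append(n)
    return factors


def euclid_witness(primes):
    """Take the lcm of the listed primes, add one, and factor the result."""
    n = lcm(*primes) + 1
    return n, prime_factors(n)


print(euclid_witness([2, 3, 5]))              # (31, [31])
print(euclid_witness([2, 3, 5, 7, 11, 13]))   # (30031, [59, 509])
```

The second call shows that the new number need not itself be prime; it only needs prime factors that were missing from the list.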

To say “there exist infinitely many” one ought to be able to say what “there exist” means. In truth, Being is one of the oldest problems of philosophy, a problem so difficult that some of the greatest philosophers in history despaired of getting a handle on it.

Aristotle Of Stagira

Perhaps it really was Aristotle who first recognized that what the convincing arguments of Socrates had over the unconvincing pleas of the Sophists was a certain combinatoriality, but certainly Aristotle is credited with “discovering logic” anyway. For thousands of years students have stared glassy-eyed as their professors dully intoned the sacred logoi:

- Major Premise: Each man is a mortal.

- Minor Premise: Socrates is a man.

- Conclusion: Socrates is a mortal.

Aristotle was a genius. His examples are always correct, even if some of his definitions cry out for cleanup. Of course, our copies of Aristotle's works are posthumous lecture notes and it is quite possible that Aristotle wouldn't have been an Aristotelian in the modern sense.

But what might Aristotle have sounded like if he were a fool?

- Major Premise: Each man exists.

- Minor Premise: Socrates is a man.

- Conclusion: Socrates exists.

Already we are in deep trouble. “Some set of primes is infinite” is a perfectly well formed sentence in term logic. But there is no syllogistic proof. Induction cannot be captured syllogistically because some sequences of true syllogisms are true (the sequence "Is there a number less than n" for n > 2) and some are not (the sequence "Is there a number greater than n" for n > 2). Presumably Aristotle discussed this in the lost "On Mathematics". Equally troubling, Euclid’s proof is not syllogistic. Valid reasoning about being went beyond Aristotle even before him.

Aristotle was a genius, but not a logician. Aristotle was a philosopher. His account of being, of existence, of a proof of “Socrates is a man” was philosophical rather than logical. Aristotle’s account of being was genetic as well as linguistic. A definition is words that govern the what it was to be (this is difficult even in the original Greek). Each object is a hylomorphic compound of defined things (some of which may be themselves hylomorphic compounds). This compounding “causes” (or “explains”) the object. Ultimately, this chain goes back - in finitely many steps - to the (possibly unique) unmoved mover (Aristotle relies on religious faith for the unity).

This is a complicated and tortured account of being, and I have not touched on one tenth of the subtleties Aristotle analyzes. Gathering Aristotle into a single, memorable and inaccurate slogan:

TO BE IS TO BE CAUSED!

One could further assume, with the early Donald Davidson, that each object is uniquely determined by its causes. Aristotle himself does not assume this, at least not as a logical principle. He admits there could be multiple unmoved movers, for instance.

Before moving on from Aristotle, let’s look at a semi-Aristotelian account of the standard syllogism.

- Major Premise: Each man is a mortal.

  - Each man is a complex. Each complex is hylomorphically composed of elements. Each man is hylomorphically composed of elements.

  - No thing composed of elements out of their sphere is immortal. Each thing hylomorphically composed of elements is a thing composed of elements out of their sphere. No thing hylomorphically composed of elements is immortal.

  - No thing hylomorphically composed of elements is immortal. Each man is hylomorphically composed of elements. No man is immortal.

- Minor Premise: Socrates is a man.

  - Each thing with a rational soul is a man. Socrates has a rational soul. Socrates is a man.

- Conclusion: Socrates is a mortal.

Of course, one could continue to make finer and finer subarguments until - Aristotle believes - one hits the bottom. Aristotle further has very strong opinions on what the bottom will look like, but I pass over the remainder.

Santo Tommaso d'Aquino

I can not and will not give an account of post-Aristotelian theories of being. I will say that Aristotle’s followers did not follow even his carefully wrought example. They tried to reason about existence as a predicate like “a man” or “a mortal” - “a being”.

Immanuel Kant

Immanuel Kant woke us from our sub-Aristotelian slumbers. He told us “Existence is not a predicate!” or more verbosely “Being is evidently not a real predicate, that is, a conception of something which is added to the conception of some other thing. Logically, [Being] is merely the copula of a judgement”.

This can be illustrated by considering an incoherent description, such as the false geometric sentence “ABCD is a round square.” No such shape exists, on pain of contradiction. Consider the following sentence of sophistical geometry: “ABCD is an existent round square.” On pain of contradiction, such a round square must exist, but also on pain of contradiction the shape must not exist. Sophistry is sophistry after all.

As wise as Kant’s argument is, his analysis has certain flaws. One is that it conflates two parts of a sentence: the quantifier and the copula. The extremely simple structure of a term logic encourages this confusion. Every sentence in term logic has exactly four distinct parts: one quantifier, one subject, one copula and one predicate. If one were simply careful about order, the copula could be dropped (since it never changes).

A more fundamental flaw is that the argument is essentially negative. It tells us what being is not but little about what being is. Kant’s argument read positively is essentially a gloss on Aristotle’s logical/genetic hybrid conception of being.

The same is true of post-Kantian philosophy. Schopenhauer, for instance, relies explicitly on Aristotle’s genetic/logical hybrid to give his account of being (or as he phrases it, his “root of the principle of sufficient reason”). Hegel, for another instance, tries to purify being into a purely genetic account.

William Shakespeare

Euclid’s argument shows we ought not leave well enough alone. Shakespeare (a former classics teacher) was a famous fan of modern philosophy. The Bard was certainly thinking of Aristotle when he said “There are more things in heaven and earth, Horatio, than are dreamt of in your philosophy.”

I can not and will not cover the history of being in post-Aristotelian philosophy. Instead, I will simply outline a Quinean theory of being. I will begin by introducing a short begriffsschrift.

Jan Łukasiewicz

Let

\[ R_n^m \]

be a relation of arity n (or 0 if n is blank). The symbol m is either blank or a numeral. This gives us a potentially infinite list of relations to choose from. I use the Łukasiewicz or “Polish” style. In addition to removing lots of needless syntactical blathering about parentheses, this notation forges a direct connection between relation syntax and trees. As syntactic sugar, I allow other capital letters to represent relations as well.

The variables/constants of relations will be denoted by lower case letters \( x_i \) where \(i\) is a number. As further syntactic sugar, I allow other lower case letters as well.

There are now many ways of writing “Socrates is a man.”. For instance,

\[ M_1s \]

where \( M_1 \) is a 1-ary relation (a predicate) “is a man” and \( s \) is the constant Socrates. Closer to Kant’s analysis is

\[ E_2sm \]

where \( E_2 \) is the copula relation, \( s \) is the constant Socrates and \( m \) is the constant Man. That is, Socrates is in the set of men (see Quine’s New Foundations and Mathematical Logic for his analysis of the copula as set membership).

Augustus De Morgan

The "logical constants", so called because they don’t change their meaning across different theories, are particularly important relations. Examples of logical constants are: and, or, not, material implication and logical equivalence. I will denote these by \( A_2xy \), \(V_2xy\), \(N_1x\), \(T_2xy\) and \(L_2xy\). The syntax here is quite intuitive.

The modern idea of the logical constant dates to the followers of the algebraist George Boole: Jevons, De Morgan, Venn. Interestingly, Boole’s own conception was quite different.

As an example, let’s look at congruence in geometry. This relation - \( C_2 \) - is an equivalence relation. This means that

- Reflexivity: For all \( a \), \(C_2aa\)

- Symmetry: For all \(a,b\), \(T_2C_2abC_2ba\)

- Transitivity: For all \(a,b,c\), \(T_2A_2C_2abC_2bcC_2ac\)
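The connection between Polish notation and trees can be made concrete with a small parser. This is a sketch under assumptions that are not in the text: tokens are separated by spaces, a relation symbol is a capital letter optionally followed by `_` and its arity (blank meaning arity 0), and anything else is a variable or constant. The function names `parse` and `to_tree` are invented for illustration.

```python
def parse(tokens):
    """Parse one formula from the front of tokens; return (tree, rest)."""
    if not tokens:
        raise ValueError("ran out of tokens while reading arguments")
    head, rest = tokens[0], tokens[1:]
    if not head[0].isupper():
        # Lower-case token: a variable or constant, i.e. a leaf of the tree.
        return head, rest
    # Relation symbol: the arity is the numeral after "_", or 0 if blank.
    arity = int(head.split("_")[1]) if "_" in head else 0
    args = []
    for _ in range(arity):
        arg, rest = parse(rest)
        args.append(arg)
    return (head, *args), rest


def to_tree(formula):
    """Parse a whole space-separated formula into a nested tuple."""
    tree, rest = parse(formula.split())
    if rest:
        raise ValueError(f"unused tokens: {rest}")
    return tree


# Transitivity body: T_2 A_2 C_2 a b C_2 b c C_2 a c
print(to_tree("T_2 A_2 C_2 a b C_2 b c C_2 a c"))
# ('T_2', ('A_2', ('C_2', 'a', 'b'), ('C_2', 'b', 'c')), ('C_2', 'a', 'c'))
```

No parentheses are needed because each symbol's arity tells the parser exactly how many subtrees to read next.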

TO BE IS TO BE THE VALUE OF A VARIABLE

That is: Łukasiewicz notation suggests objects as first class citizens and relations as hylomorphic combinations of objects. David Lewis, for instance, endorses this reading in his defenses of both modal realism and Humean Supervenience. The root of this is an analogy between a constant \( s \) for Socrates and a variable \( x_i \).

Hermann Weyl

Łukasiewicz notation is beautiful, but it has some unfortunate aspects. I will name two. First, it isn't easy to understand this analogy between constants and variables. Second, identity of argument is denoted syntactically by identity of variable.

This syntactical choice doesn’t only introduce unnecessary verbosity - the relation in transitivity has only three variables but six places for them to enter - but also creates “scope” ambiguity. It is perfectly licit to let \(x_i\) mean different things in different parts of a sentence, but not always.

To get rid of scope, we will use functors which map relations to relations. The general idea of a “variable free” notation was originally invented by Weyl. Unfortunately, his notation was a bit clumsy and was profoundly uninfluential. A very powerful variable free logic with an attractive begriffsschrift called “Combinatory Logic” was introduced by the unfortunate Moses Schönfinkel.

The functors we will use were christened “predicate functors” by Quine. I will denote these with boldface letters. These break up into three groups. The simplest are the variable permuters:

- Major Inversion: \( L_2\mathbf{maj} R_n^p x_1 ... x_n R_n^p x_n x_1 ... x_{n-1} \)

- Minor Inversion: \( L_2\mathbf{min} R_n^p x_1 ... x_n R_n^p x_1 ... x_{n-2} x_n x_{n-1} \)

- Reflection: \( L_2\mathbf{ref} R_n^p x_1 ... x_{n-1} R_n^p x_1 ... x_{n-1} x_{n-1} \)

The next group are the ‘logical’ or connective functors:

- Negation: \(L_2\mathbf{neg} R_n^p x_1 ... x_n N_1 R_n^p x_1 ... x_n\)

- Cartesian Product: \(L_2\mathbf{car} R_n^p R_m^q x_1 ... x_n y_1 ... y_m A_2 R_n^p x_1 ... x_n R_m^q y_1 ... y_m\)

- Derelativization: \(\mathbf{der}R_n^p x_1 ... x_{n-1}\) if and only if there is something \(x_n\) such that \( R_n^p x_1...x_n \)

We take as an example the congruence relation \( C_2 \) in geometry. It is an equivalence relation, that is

- Reflexivity: \(\mathbf{neg \ }\mathbf{der \ }\mathbf{neg \ } \mathbf{ref} C_2\)

- Symmetry: \(\mathbf{neg \ }\mathbf{der \ }\mathbf{neg \ }\mathbf{neg \ }\mathbf{der \ }\mathbf{neg \ }\mathbf{maj \ }\mathbf{ref \ }\mathbf{maj \ }\mathbf{maj \ }\mathbf{ref \ }\mathbf{car \ }C_2\mathbf{neg \ }C_2\)

- Transitivity: \(\mathbf{neg \ }\mathbf{der \ }\mathbf{neg \ }\mathbf{neg \ }\mathbf{der \ }\mathbf{neg \ }\mathbf{neg \ }\mathbf{der \ }\mathbf{neg \ }\mathbf{maj \ }\mathbf{ref \ }\mathbf{maj \ }\mathbf{ref \ }\mathbf{maj \ }\mathbf{ref \ }\mathbf{maj \ }\mathbf{min \ }\mathbf{neg \ }\mathbf{car \ }\mathbf{neg \ }\mathbf{car}C_2C_2\mathbf{neg}C_2\)
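The functors can be modeled as operations on finite relations, which makes the shortest of these expressions easy to check by machine. This is a sketch under assumptions of my own: a relation is a set of tuples over a small fixed universe `DOMAIN`, the class `Rel`, the helper `holds`, and the sample relations `C2` (same parity) and `LESS` are all invented for illustration, and `min_` carries an underscore only to avoid Python's built-in `min`. Only the reflexivity expression is evaluated here.

```python
from itertools import product
from typing import NamedTuple

DOMAIN = (0, 1, 2, 3)  # hypothetical finite universe of objects


class Rel(NamedTuple):
    arity: int
    rows: frozenset  # the tuples for which the relation holds


def maj(r):
    # maj R x1..xn  iff  R xn x1..x(n-1)
    return Rel(r.arity, frozenset(s[1:] + s[:1] for s in r.rows))


def min_(r):
    # min R x1..xn  iff  R x1..x(n-2) xn x(n-1)
    return Rel(r.arity, frozenset(s[:-2] + (s[-1], s[-2]) for s in r.rows))


def ref(r):
    # ref R x1..x(n-1)  iff  R x1..x(n-1) x(n-1)   (needs arity >= 2)
    return Rel(r.arity - 1, frozenset(s[:-1] for s in r.rows if s[-1] == s[-2]))


def neg(r):
    # Complement relative to all tuples of the same arity over DOMAIN.
    return Rel(r.arity, frozenset(product(DOMAIN, repeat=r.arity)) - r.rows)


def car(r, q):
    # car R Q x1..xn y1..ym  iff  R x1..xn and Q y1..ym
    return Rel(r.arity + q.arity, frozenset(s + t for s in r.rows for t in q.rows))


def der(r):
    # der R x1..x(n-1)  iff  some xn makes R x1..xn hold
    return Rel(r.arity - 1, frozenset(s[:-1] for s in r.rows))


def holds(r):
    """A 0-ary relation is a truth value: true iff it contains the empty tuple."""
    assert r.arity == 0
    return () in r.rows


C2 = Rel(2, frozenset((a, b) for a in DOMAIN for b in DOMAIN if a % 2 == b % 2))
LESS = Rel(2, frozenset((a, b) for a in DOMAIN for b in DOMAIN if a < b))

print(holds(neg(der(neg(ref(C2))))))    # True: same-parity is reflexive
print(holds(neg(der(neg(ref(LESS))))))  # False: nothing is less than itself
```

Note that no variable appears anywhere in the two final expressions; the bookkeeping that variables normally do is carried entirely by the order in which the functors are applied.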

Quine's method gives us a new slogan, in many ways superior to the first two:

TO BE IS TO DERELATIVISE A RELATION!

This slogan has certain advantages. First of all, the notion of a "variable" is secondary to the notion of a relation ("There exists an x such that x" is word salad). Next, perplexities of scope, quantification and so forth are absorbed into the algebraic properties of the functors. But the most important advantage is that this definition easily generalizes into a modern algebraic theory not tied to special assumptions about first order logic.

William Lawvere

Categorical semantics is a vast generalization of the preceding section, a generalization which is Weyl in neither sense of the phonemes. Introduced by Lawvere in a paper so popular that I have heard him complain "You know that I have written other things, right?", the concept is essentially to abstract the predicate functors while preserving the structure.

Major Inversion and Minor Inversion, for instance, are just ways of capturing the concept of a permutation group. The Cartesian Product is a way of getting, well dang, products; not a big shock there. These can obviously be generalized to groups and products in general.

What about the "all important Derelativization operator"? Well, there is a general reading very natural in category theory that I have yet to translate into simple words. We have a category with finite products, and some functor F from this to the category of truth values. Let X be the type of some variable. Derelativization is a particular case of a left adjoint of the natural transformations from F(X × -) to F(-) (i.e. it gets rid of one variable of some type by returning a truth value).
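One common way to spell the adjunction out, offered as a sketch in standard notation rather than as the exact formulation meant above: assume F sends each type to its predicates ordered by entailment, write \( \pi : X \times Y \to Y \) for the projection, and write \( \pi^* : F(Y) \to F(X \times Y) \) for the map that treats a predicate on Y as one on X × Y with the X variable idle. The symbols \( \pi \), \( \pi^* \), \( \exists_X \), \( \varphi \) and \( \psi \) are introduced here for illustration. Derelativization over X is then the map \( \exists_X : F(X \times Y) \to F(Y) \) determined by

\[ \exists_X \varphi \le \psi \iff \varphi \le \pi^* \psi \]

for every \( \varphi \) in \( F(X \times Y) \) and \( \psi \) in \( F(Y) \). Read left to right: if "some x satisfies \( \varphi \)" entails \( \psi \), then \( \varphi \) itself entails \( \psi \) whatever x happens to be, and conversely.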

If the "category of reality" (perhaps a sort of category of relations) has an initial object (perhaps the empty relation), then existence has a less natural but conceptually simpler gloss: each morphism from an initial object denotes an object.

These brief notes of course don't amount to anything like a history of theories of being. But it's really very simple, as the fellow says:

There is nothing you can see that is not a flower;

There is nothing you can think that is not the moon.